ChatGPT’s Disruption of Education and College Admissions: A Tool or Cheat Code

In May of 2022, CANTA, the official magazine of the University of Canterbury, New Zealand, published an interview with the Essay Witch. This was the pseudonym chosen by a UC student in order to protect their identity. “I don’t think of this as cheating,” they said while explaining how they had used an AI chatbot to transform their grades from Cs to Bs (and even As) over the course of the academic year. The student justified the practice after closely reading the university’s rules on academic honesty, which say that you’re not allowed to submit someone else’s work as your own. “Well,” they concluded, “it’s not somebody, it’s AI.”

This student’s experience distills the dilemma of generative AI in academia to its essence. Is it a helpful tool or a cheat code? Can it help students and teachers reach new depths of inquiry, or will it deprive them of the hard work required to develop mastery of their subjects? And most existentially, what counts as human creativity now that ubiquitous algorithms can convincingly recreate it at the click of a button?

As students, teachers, and administrators grappled with these questions in advance of the new academic year in September, the ground beneath them continued to shift. The Essay Witch likely used GPT-3 for their papers over the course of the 2021-2022 academic year. Shorthand for Generative Pre-trained Transformer 3, GPT-3 was released to the world in May 2020 by OpenAI, an artificial intelligence research lab based in San Francisco, California. As the number suffix implies, it was the third iteration of their autoregressive language model, which uses deep learning to respond to queries with human-like language. The autoregressive element is key. It means that GPT models build a response one word at a time, predicting each new word from everything that came before it. The models do not teach themselves as they are used; their fluency and accuracy improve when they are retrained and released as new versions. So far, each new version has been markedly better than the last.
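To make the word "autoregressive" concrete, here is a toy sketch in Python. It is not how GPT-3 is built: the real model is a large neural network, while this example only counts which word tends to follow which in a made-up sentence. The function names and the sample sentence are invented for illustration. What it does share with GPT models is the loop itself, where each new word is chosen from the text produced so far and then fed back in.

```python
from collections import defaultdict


def train_bigram_counts(text):
    """Count how often each word follows each other word in the text."""
    counts = defaultdict(lambda: defaultdict(int))
    words = text.split()
    for prev_word, next_word in zip(words, words[1:]):
        counts[prev_word][next_word] += 1
    return counts


def generate(counts, start, max_words=8):
    """Autoregressive loop: choose the next word from the latest word,
    append it, and repeat with the longer sequence."""
    output = [start]
    for _ in range(max_words - 1):
        followers = counts.get(output[-1])
        if not followers:
            break  # the toy model has never seen anything follow this word
        # Greedy choice: the most frequent follower, ties broken alphabetically.
        next_word = min(followers, key=lambda w: (-followers[w], w))
        output.append(next_word)
    return " ".join(output)


# Hypothetical training text, chosen only for illustration.
corpus = "the student wrote the essay and the student read the essay aloud"
counts = train_bigram_counts(corpus)
print(generate(counts, "the", max_words=5))
```

Note that nothing in `generate` changes `counts`. Producing text does not make this toy model any better, and it would only improve if it were retrained on more text.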

The acceleration of these improvements is already tearing through the fabric of academia, which doesn’t have a great track record in responding quickly to cultural and technological change. In an Atlantic essay provocatively titled “The College Essay Is Dead,” journalist and former professor Stephen Marche predicted that the delay in an effective response from higher ed administration would be 10 years: “two years for the students to figure out the tech, three more years for the professors to recognize that students are using the tech, and then five years for university administrators to decide what, if anything, to do about it.”

In the few months since the interview with the Essay Witch was published, generative AI itself has already lived another generation. ChatGPT, a model built on top of GPT-3, was released in November 2022, and its ability to produce human-like responses to sophisticated questions–including writing long-form college-level essays–immediately caused hand-wringing from teachers at every level. Yet, while both experienced professors and academic integrity tools like Turnitin have pointed out certain tells that allow us to discern AI-generated text from real student work, it’s clear this will be a constantly moving target. Indeed, these issues are set to intensify dramatically in early 2023, when OpenAI is slated to release GPT-4. This generation is widely expected to be considerably more capable than the model behind ChatGPT, though OpenAI has not announced its size. In layman’s terms, this means that all of those little tells that AI made a specific text–fabricated sources, clumsy syntax, lack of throughline or argument–will diminish with each generation of the model. That is, if they’re not already undetectable.

The debate in 2023 may focus on what constitutes plagiarism and how we identify it. But the implications amount to a total paradigm shift for education. So, what does this mean for academic work, college applications, and our conceptualization of human culture more broadly?

Impact on Academia

On the shortest time scale, this is going to be a rough year for teachers, especially those working with high school and college students. A blog post from Sora Schools, a fully online middle and high school, noted that every student now has access to a “tool that can effortlessly complete their homework–even the most arduous book reports or calculus assignments–at an A level, and the teacher will likely never know.” Given the depth of this disruption, teachers are suddenly faced with a generational challenge. What are they to do?

The downsides of this disruption are obvious. First and foremost, AI now enables all students to “cheat”, easily and with little probability of detection. Whatever your opinion of grade inflation in secondary schools, AI chatbots have the potential to make differential assessment nearly impossible. If every student submits a unique A-level essay with no traceable signs of traditional dishonesty, what’s a teacher to do?

This quandary then cascades to other issues of mastery and even competency. If students are able to fake their way through historically challenging classes, how will they be ready to tackle the problems in their careers that their degrees should have trained them to solve? Disputes like the one that unfolded last fall at NYU–where 82 students filed a petition alleging a respected chemistry professor’s class was simply too hard to pass–might never have arisen if those failing students had simply turned to AI. But if aspiring med school students cheat their way through organic chemistry problem sets, what risks would those people pose as physicians to their patients?

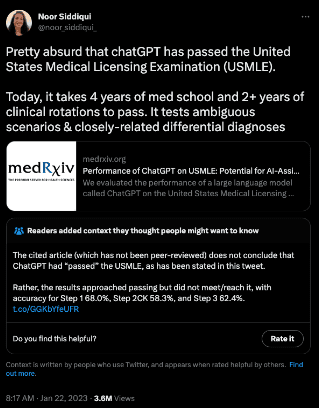

And the potential risks run much deeper than just mastery of certain high-skill fields. By providing everyone sophisticated answers to coursework in every field, AI provides easy shortcuts past the hard work required to learn to write well. If GPT-3 can already pass final MBA exams at top business schools like University of Pennsylvania’s Wharton, what is the point of learning how to do the work yourself? The most efficient students will simply learn how to employ these tools to write their exams rather than writing the exams themselves.

This is perhaps why many secondary schools and colleges are making moves to ban AI tools as part of revisions to their honor codes. In early January of this year, Princeton University senior Edward Tian took the internet by storm when he released GPTZero, an app that detects whether a piece of text has been written by AI. The app’s tagline is “Humans deserve to know the truth.” In the first week of its release, it was used by more than 30,000 university and high school educators to detect AI plagiarism in their students’ work.

From the L.A. Unified School District to New York City Public Schools, administrators see AI as an existential threat to the culture of academic honesty. ChatGPT simply “doesn’t help build critical-thinking and problem-solving skills,” said Jenna Lyle, the deputy press secretary of the NYC Department of Education, in a recent press release. This would seem to be a comprehensive rebuttal to the logic of the Essay Witch. AI might be a tool, but one might say the same of steroids, and we can see the corrosive effect those have on the spirit of competition in sports.

But analogies only go so far, and unlike performance-enhancing drugs, the reach of AI is potentially limitless. Blocking AI sites from school computers might prevent students from accessing them in class, but the rest of their waking lives will increasingly be influenced by AI. Recognizing this, some educators see promise beyond the initial panic.

For one, teachers could put AI to good use themselves, taking inspiration from best-in-class pedagogical materials at their fingertips and automating the most time-consuming and menial tasks in order to refocus on student interaction and instruction. The often thankless labor of grading papers or providing feedback for scores of students in introductory classes could be accomplished nearly instantly. Moreover, AI could be trained to provide feedback authentic to the instructors themselves. Consider Character.ai, another chatbot site that allows people to have open-ended conversations with real (and fake) famous figures. The company’s model is trained to converse like famous people based on their history of speech and writing. Why, then, wouldn’t a teacher upload all of their historical feedback to produce an AI bot that gives their style of feedback on first drafts of student papers in a fraction of the time (especially if the students wrote the essays via AI to begin with)?

The potential for positive disruption also extends to ongoing efforts in the academy to diversify curricula and reading lists. Rather than choosing the same textbooks as every year, this could be a perfect time to explore similar but lesser-known publications. Instead of teaching Macbeth, English teachers might turn to Shakespeare’s lesser-known sonnets. Political science might turn a brighter light on marginalized communities about whom far less ink has been spilt. Museums could mount more exhibitions on artists of color. Though the gambit would be that chatbots couldn’t find enough material on more esoteric subjects to produce convincing answers, the effect would be to expose students to a new diversity of artifacts and individuals, accomplishing a long-imagined “canon change” as a matter of course.

Most broadly, AI might provide a window of opportunity to rethink school itself, which, by many metrics, is not delivering for this generation of students. In 2020, Yale’s Center for Emotional Intelligence and the Child Study Center found that a large majority of students surveyed–nearly 75%–had negative feelings about their time at school, something we explore in our white paper on student mental health. If 3 of 4 students are “tired, stressed, and bored” from their time at school, clearly something needs to change. If AI imperils the status quo, perhaps it represents an opportunity for educators and administrators to completely rethink education as a system in society. And if technology caused this crisis, it may also bring novel solutions. Companies like Prof Jim can transform textbooks or Wikipedia pages into video lessons at the touch of a button, providing more engaging content for a generation that embraces video as a primary means of communication.

Impact on College Admissions

While professors, teachers and administrators take time to weigh these opportunities, high school seniors will still be applying to college. For them the process will be fraught. The complicated, stressful application process often boils down to the quality of the personal essay, a requirement that now amounts to a rite of passage. The goal is to craft something so engaging, but also authentic, that admissions officers will sit up and take notice amidst the thousands of such essays they read each year. So much pressure is contained in these 650-word texts (in addition to reams of supplemental writing) that many college counselors and consultants hang their hats on editing them for maximum effect.

This is precisely why opinion pieces asking “Does ChatGPT signify the end of the application essay?” must be given serious thought. Recent scandals have shown how easily family wealth allows applicants to game the system. This poses a true moral dilemma for applicants: should they accept the uneven playing field we all know exists, or should they tip things slightly in their favor with the help of widely available tools like ChatGPT? Forbes Online Magazine showed how simple that approach might be. Consider one of the core Common Application essay prompts: “Some students have a background, identity, interest, or talent that is so meaningful they believe their application would be incomplete without it. If this sounds like you, then please share your story.” In less than ten minutes–“far less time than we hope students would spend composing essays and far more time than most admissions officers spend reading essays”–ChatGPT produced a convincing and compelling text, albeit one that suggested heavy editing by an experienced adult.

While it sidesteps the stress and frustration of writing these essays, using AI in this way carries grave consequences. All students must now walk the ethical tightrope drawn by the Essay Witch: am I using a tool or am I cheating? Do I stand on moral principles knowing full well that many, many others are submitting AI-generated or AI-enhanced essays? And what of the psychological benefits that we know come from the focused self-reflection that good personal essays require?

It may be that these thorny issues lead to a productive disruption of the application process. For many schools, the essay has limited utility; most acceptances are determined by simple calculations of test scores, GPA, and financial qualifications. In situations where essays remain an inflection point, admissions officers could be compelled to make positive changes to their process. After all, what’s the point of poring over thousands of pages of such essays if a significant percentage are fabricated and inauthentic? Perhaps the means for assessing candidates will move closer towards the “holistic” processes to which many schools already aspire.

While schools and admissions offices search for temporary solutions to these issues, they also need to think more proactively about the changes yet to come. In a way, the release of ChatGPT can be considered a clarion call. It’s clear that AI will disrupt academic work in profound ways over the course of the next months and years, making it no longer the purview of only computer scientists or administrators; every student, from emerging engineers to potential poets, will incorporate AI into their studies. This means they will need not only to have an opinion about the uses and abuses of these technologies but also to know how to use them productively and ethically. Thus, it’s up to all of us–students, educators, technologists, and parents–to decide what the future of learning with AI will look like.

Do Your Own Research Through Polygence

Your passion can be your college admissions edge! Polygence provides high schoolers a personalized, flexible research experience proven to boost your admission odds. Get matched to a mentor now!